As described in the Summer 2014 issue of Topics, Harvard researchers are pushing the limits of computing power to achieve new breakthroughs in science and engineering. What will high-performance computing mean for you?

Sustainable energy

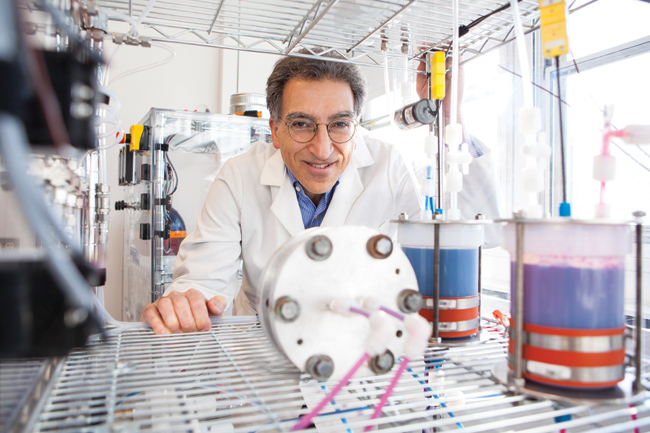

To select the best chemicals for use in a flow battery, the researchers relied on high-performance computing power. (Photo by Eliza Grinnell.)

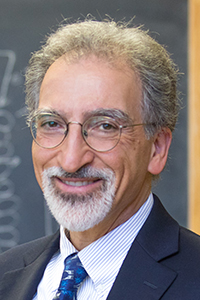

A sophisticated computational method called quantum Monte Carlo is at the heart of the physical chemistry research conducted by Alán Aspuru-Guzik, Professor of Chemistry and Chemical Biology. Running calculations on Harvard’s Orgoglio cluster of parallel graphics processing units (GPUs)—theoretically capable of up to 9.6 teraflops—Aspuru-Guzik’s team can determine the electronic properties of individual molecules with an extremely high level of accuracy, in less than a day.

Using similar techniques, his group has analyzed a thousand kinds of quinones (a class of organic molecule) in search of the best candidates to store energy in a new type of battery.

This organic flow battery, recently designed, built, and tested by Michael J. Aziz, Gene and Tracy Sykes Professor of Materials and Energy Technologies, and Roy G. Gordon, Thomas Dudley Cabot Professor of Chemistry and Professor of Materials Science, could fundamentally transform our nation’s electricity grid, enabling an affordable and continuous supply of power from wind and solar farms—even when the wind isn’t blowing and the sun isn’t shining.

Self-knowledge

Researchers collaborating on the Connectome Project at the Center for Brain Science aim to create a complete wiring diagram of all the neurons in a healthy human brain. Such a map would empower neuroscientists to study the causes and mechanisms of thought, behavior, memory, aging, and mental illness—knowledge that could fundamentally alter our conception of “self.”

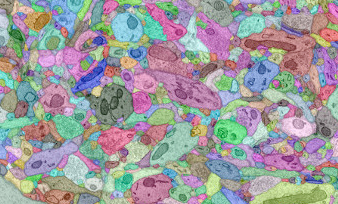

A cross-section of neurons in the brain. (Image courtesy of Hanspeter Pfister.)

One strategy for building the Connectome involves capturing electron micrographs of very thin slices of brain tissue and then reassembling those images into a three-dimensional model. However, the images are so detailed, and the slices so thin, that manipulating them digitally requires a new type of software that can focus on very small portions at a time. Hanspeter Pfister, An Wang Professor of Computer Science at SEAS, has been creating this tool.

Better health care

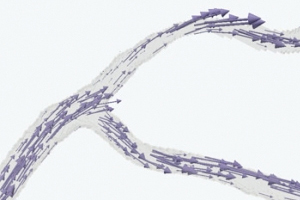

A vector field representation of blood flow. (Image courtesy of the Multiscale Hemodynamics Project.)

The Multiscale Hemodynamics Project is using advanced computational techniques and visual simulations to understand how red blood cells and other suspended particles flow through the human circulatory system.

Because anomalies in blood flow can indicate blockages or stress on arterial walls, a real-time clinical application of this system could one day help predict and prevent heart attacks.

Based at SEAS and led by Efthimios Kaxiras, John Hasbrouck Van Vleck Professor of Pure and Applied Physics, the project also involves researchers at Brigham and Women’s Hospital and the National Research Council of Italy.

A view of the universe

Harvard researchers and a team from the California Institute of Technology (Caltech) built a new radio telescope in the Owens Valley of California to study the first tens of millions of years after the Big Bang, before the first stars formed, as well as bursts of energy from magnetic interactions between exoplanets and their parent stars. Caltech antennas and an innovative Harvard supercomputer, both sited in this remote mountain valley, work together to take snapshots of the sky, horizon to horizon, every 9 seconds, generating a staggering 1 terabyte of data per hour.
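To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the two numbers quoted above (one snapshot every 9 seconds, 1 terabyte per hour) and assumes a decimal terabyte (10^12 bytes); the function names are illustrative and not part of any LEDA software.

```python
# Back-of-the-envelope arithmetic for the telescope's data rate.
# Inputs come from the article: one all-sky snapshot every 9 seconds
# and roughly 1 terabyte of data per hour.
# Assumption: 1 terabyte = 10**12 bytes (decimal definition).

SECONDS_PER_HOUR = 3600
BYTES_PER_TB = 10**12


def snapshots_per_hour(interval_s):
    """Number of snapshots taken in one hour at the given cadence."""
    return SECONDS_PER_HOUR / interval_s


def bytes_per_snapshot(tb_per_hour, interval_s):
    """Average data volume attributable to a single snapshot."""
    return tb_per_hour * BYTES_PER_TB / snapshots_per_hour(interval_s)


def sustained_rate_mb_per_s(tb_per_hour):
    """Average sustained data rate in megabytes per second."""
    return tb_per_hour * BYTES_PER_TB / SECONDS_PER_HOUR / 1e6


if __name__ == "__main__":
    print("snapshots per hour:", snapshots_per_hour(9))
    print("GB per snapshot:", bytes_per_snapshot(1.0, 9) / 1e9)
    print("MB per second:", round(sustained_rate_mb_per_s(1.0), 1))
```

Under these assumptions, the telescope takes 400 snapshots an hour, averaging about 2.5 gigabytes each, for a sustained rate of roughly 278 megabytes per second.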

A portion of the Owens Valley Long Wavelength Array that hosts LEDA. (Photo courtesy of Danny Price.)

The Large Aperture Experiment to Detect the Dark Ages (LEDA), led by Lincoln J. Greenhill, a senior research fellow in Astronomy, uses commercial graphics processing units (GPUs) to build the supercomputer and process signals from 512 antennas instantaneously. In at least one sense, this is the largest radio camera in the world. The GPU effort grew from a close cross-disciplinary collaboration with Profs. Pfister and Aspuru-Guzik that continues today.

LEDA is a stepping stone to even larger experiments, which would likely be sited in even more remote areas, far from man-made radio interference. Low power consumption and fast development times will be critically important, and the Harvard team has shown that harnessing GPUs provides the necessary efficiencies.

This is an excerpt from the Summer 2014 issue of Topics, a publication of the Harvard School of Engineering and Applied Sciences. For related content, visit http://seas.harvard.edu/topics.
