Jumping spiders

Jumping spiders have evolved an efficient depth perception system, allowing them to accurately pounce on unsuspecting targets from several body lengths away. (Video courtesy of Paul Shamble, Tsevi Beatus, Itai Cohen, and Ron Hoy)

For all our technological advances, nothing beats evolution when it comes to research and development. Take jumping spiders. These small arachnids have impressive depth perception despite their tiny brains, allowing them to accurately pounce on unsuspecting targets from several body lengths away.

Inspired by these spiders, researchers at the Harvard John A. Paulson School of Engineering and Applied Sciences (SEAS) have developed a compact and efficient depth sensor that could be used on board microrobots, in small wearable devices, or in lightweight virtual and augmented reality headsets. The device combines a multifunctional, flat metalens with an ultra-efficient algorithm to measure depth in a single shot.

“Evolution has produced a wide variety of optical configurations and vision systems that are tailored to different purposes,” said Zhujun Shi, a Ph.D. candidate in the Department of Physics and co-first author of the paper. “Optical design and nanotechnology are finally allowing us to explore artificial depth sensors and other vision systems that are similarly diverse and effective.”

The research is published in Proceedings of the National Academy of Sciences (PNAS).

Many of today’s depth sensors, such as those in phones, cars and video game consoles, use integrated light sources and multiple cameras to measure distance. Face ID on a smartphone, for example, uses thousands of laser dots to map the contours of the face. This works for large devices with room for batteries and fast computers, but what about small devices with limited power and computation, like smart watches or microrobots?

Evolution, as it turns out, provides a lot of options.

Humans measure depth using stereo vision, meaning when we look at an object, each of our two eyes collects a slightly different image. Try this: hold a finger directly in front of your face and alternate opening and closing each of your eyes. See how your finger moves? Our brains take those two images, examine them pixel by pixel and, based on how the pixels shift, calculate the distance to the finger.

“That matching calculation, where you take two images and perform a search for the parts that correspond, is computationally burdensome,” said Todd Zickler, the William and Ami Kuan Danoff Professor of Electrical Engineering and Computer Science at SEAS and co-senior author of the study. “Humans have a nice, big brain for those computations but spiders don’t.”
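To make the cost of that matching search concrete, here is a minimal sketch of stereo matching along a single image row. It is an illustration only, not anything used in the study: the function names, the sum-of-absolute-differences cost, and the camera numbers (`focal_px`, `baseline_m`) are all assumptions chosen for the example.

```python
def match_disparity(left, right, x, half_window, max_disparity):
    """Search for the pixel shift that best aligns a patch around left[x]
    with a patch in the right image (hypothetical helper, 1D rows only)."""
    width = 2 * half_window + 1
    best_shift, best_cost = 0, float("inf")
    for shift in range(max_disparity + 1):
        start_left = x - half_window
        start_right = start_left - shift
        if start_right < 0:
            break  # the shifted patch would fall off the image
        # Sum of absolute differences: lower means a better match.
        cost = sum(
            abs(left[start_left + k] - right[start_right + k])
            for k in range(width)
        )
        if cost < best_cost:
            best_shift, best_cost = shift, cost
    return best_shift


def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Standard rectified-stereo relation: depth = focal length * baseline / shift."""
    return focal_px * baseline_m / disparity_px


# Toy rows: the same bright feature appears 3 pixels further left in the right image.
left_row = [0, 0, 0, 0, 0, 9, 5, 7, 0, 0, 0, 0]
right_row = [0, 0, 9, 5, 7, 0, 0, 0, 0, 0, 0, 0]

shift = match_disparity(left_row, right_row, x=6, half_window=1, max_disparity=5)
depth = depth_from_disparity(focal_px=800, baseline_m=0.06, disparity_px=shift)
print(shift, depth)
```

Even in this toy form, every pixel needs its own search over candidate shifts, which is the part that becomes expensive on full images.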

Metalens depth sensor

The video shows the metalens depth sensor working in real time to capture the depth of fruit flies. The two images on the left are the raw images captured on the camera sensor. They are formed by the metalens and are blurred slightly differently. From these two images, the researchers compute the depth of the objects in real time. The image on the right shows the computed depth map. (Video courtesy of Qi Guo and Zhujun Shi/Harvard University)

Jumping spiders have evolved a more efficient system to measure depth. Each principal eye has a few semi-transparent retinae arranged in layers, and these retinae measure multiple images with different amounts of blur. For example, if a jumping spider looks at a fruit fly with one of its principal eyes, the fly will appear sharper in one retina’s image and blurrier in another. This change in blur encodes information about the distance to the fly.

In computer vision, this type of distance calculation is known as depth from defocus. But so far, replicating nature has required large cameras with motorized internal components that capture differently focused images over time. This limits the speed and practical applications of the sensor.
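A textbook thin-lens model, which is an assumption here and not something the article specifies, shows why blur carries distance information. The symbols are introduced for illustration: $f$ is the focal length, $A$ the aperture diameter, $s$ the lens-to-sensor distance, $Z$ the object distance, $v$ the distance at which the object comes into focus, and $b$ the diameter of the blur spot on the sensor.

```latex
\frac{1}{f} = \frac{1}{Z} + \frac{1}{v}
\qquad\Longrightarrow\qquad
b = A\,\frac{\lvert s - v \rvert}{v}
  = A\,s\,\left\lvert \frac{1}{f} - \frac{1}{s} - \frac{1}{Z} \right\rvert
```

Under this model the blur is zero at the in-focus distance and grows linearly in $1/Z$ on either side of it, so one blur measurement is consistent with two distances, one nearer and one farther than the focal plane. A second image with a different focus setting removes that ambiguity, which is why the approach needs at least two differently blurred images.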

That’s where the metalens comes in.

Federico Capasso, the Robert L. Wallace Professor of Applied Physics and Vinton Hayes Senior Research Fellow in Electrical Engineering at SEAS and co-senior author of the paper, and his lab have already demonstrated metalenses that can simultaneously produce several images containing different information. Building off that research, the team designed a metalens that can simultaneously produce two images with different blur.

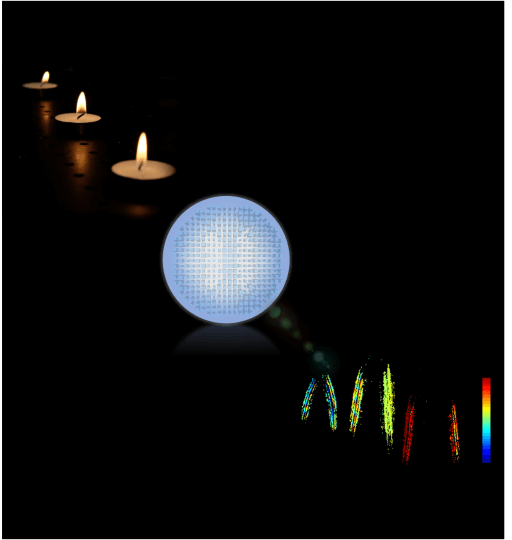

Spider-inspired metalens

The flat metalens (shown in the middle) captures images of a 3D scene, for example candle flames placed at different locations (left), and produces a depth map (right) using an efficient computer vision algorithm inspired by the eye of jumping spiders. The color on the depth map represents object distance: closer objects are colored red and farther objects blue. (Image courtesy of Qi Guo and Zhujun Shi/Harvard University)

“Instead of using layered retina to capture multiple simultaneous images, as jumping spiders do, the metalens splits the light and forms two differently-defocused images side-by-side on a photosensor,” said Shi, who is part of Capasso’s lab.

An ultra-efficient algorithm, developed by Zickler’s group, then interprets the two images and builds a depth map to represent object distance.
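The article does not describe how the algorithm works internally, so the following is only a toy sketch of the general idea of recovering depth from two differently defocused measurements. It is not the researchers' algorithm. It assumes an idealized thin lens, models the two images as coming from two hypothetical focal lengths sharing one sensor, and uses a brute-force search over candidate depths; every name and number is made up for illustration.

```python
def blur_diameter(depth, focal_length, aperture, sensor_distance):
    """Blur-spot diameter predicted by an idealized thin lens (all lengths in meters)."""
    # Distance behind the lens at which the object would be in sharp focus.
    image_distance = focal_length * depth / (depth - focal_length)
    return aperture * abs(sensor_distance - image_distance) / image_distance


def estimate_depth(blur_a, blur_b, focal_a, focal_b, aperture,
                   sensor_distance, candidates):
    """Pick the candidate depth whose predicted blur pair best matches the measured pair."""
    def mismatch(depth):
        predicted_a = blur_diameter(depth, focal_a, aperture, sensor_distance)
        predicted_b = blur_diameter(depth, focal_b, aperture, sensor_distance)
        return (predicted_a - blur_a) ** 2 + (predicted_b - blur_b) ** 2

    return min(candidates, key=mismatch)


# Hypothetical optics: two focal lengths, one shared sensor plane.
FOCAL_A, FOCAL_B = 0.050, 0.052
APERTURE, SENSOR_DISTANCE = 0.010, 0.055

# Simulate the two blur measurements for an object 0.5 m away.
true_depth = 0.5
measured_a = blur_diameter(true_depth, FOCAL_A, APERTURE, SENSOR_DISTANCE)
measured_b = blur_diameter(true_depth, FOCAL_B, APERTURE, SENSOR_DISTANCE)

# Candidate depths from 0.10 m to 2.00 m in 1 cm steps.
candidate_depths = [0.10 + 0.01 * i for i in range(191)]
estimate = estimate_depth(measured_a, measured_b, FOCAL_A, FOCAL_B,
                          APERTURE, SENSOR_DISTANCE, candidate_depths)
print(estimate)
```

Unlike stereo matching, nothing here searches for corresponding patches between the two images: each location needs only its own pair of blur values, which hints at why a defocus-based approach can be much lighter computationally.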

“Being able to design metasurfaces and computational algorithms together is very exciting,” said Qi Guo, a Ph.D. candidate in Zickler’s lab and co-first author of the paper. “This is a new way of creating computational sensors, and it opens the door to many possibilities.”

“Metalenses are a game-changing technology because of their ability to implement existing and new optical functions much more efficiently, faster and with much less bulk and complexity than existing lenses,” said Capasso. “Fusing breakthroughs in optical design and computational imaging has led us to this new depth camera that will open up a broad range of opportunities in science and technology.”

This paper was co-authored by Yao-Wei Huang, Emma Alexander, and Cheng-Wei Qiu, of the National University of Singapore. It was supported by the Air Force Office of Scientific Research and the US National Science Foundation.

Topics: Applied Physics, Computer Science, Electrical & Computer Engineering

Press Contact

Leah Burrows | 617-496-1351 | lburrows@seas.harvard.edu